The 95% Accurate Model That Fails to Change Behavior

Suppose a machine learning model determines, with 95 percent confidence, that a person is owed 500 dollars from an unclaimed deposit. The data is clean, the match is correct, and the prediction is delivered as a personalized notification. The user reads it, understands it, and takes no action.

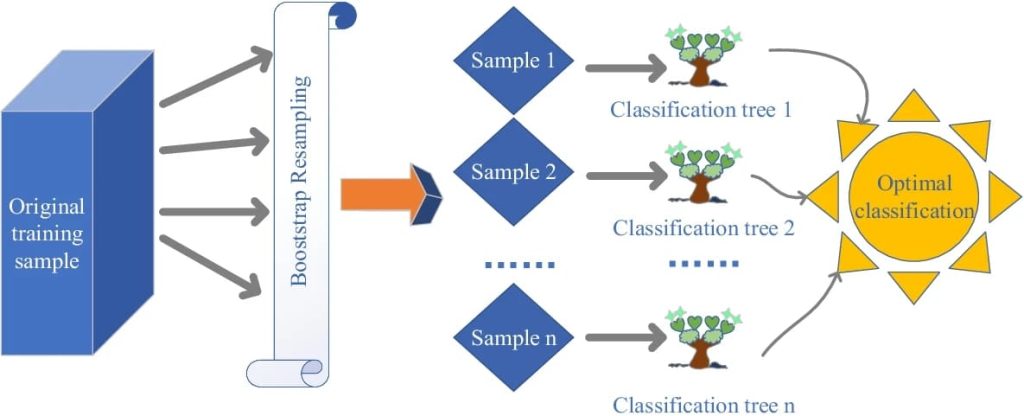

Bootstrap resampling trains multiple classification trees whose combined outputs produce a more stable, and typically more accurate, prediction.

This is not a modeling failure. It is a failure to translate prediction into behavior. In finance, machine learning tends to be strong at recognizing opportunities and weak at prompting action. The gap between how models assume people behave and how people actually behave exposes a basic weakness of algorithmic systems: accuracy does not capture motivation, emotion, or timing. This article looks at where machine learning works, where it consistently fails, and why psychological reality is needed to bridge the gap between prediction and behavior.

What Machine Learning Models Capture Well

Machine learning has delivered real technical gains in finance. Pattern recognition models are good at finding probable matches between people and unclaimed property across massive public financial records. Classification systems are effective at fraud detection because they flag anomalies in claim behavior.

Eligibility prediction models estimate the likelihood that a claim will succeed from documentation completeness and past outcomes. Regression models estimate fund values from incomplete data, and risk-scoring models gauge how complex a claim is and how long it is likely to take to process.

These systems score well on conventional evaluation metrics. Precision and recall for asset matching tend to improve as datasets grow. Technically, these are significant accomplishments. The models can answer the important questions correctly: who is eligible, how much is available, and how complex recovery will be. The limitation lies not in what models can identify but in the motivation they cannot create.
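For readers less familiar with the two metrics, here is a minimal sketch of how precision and recall could be computed for asset matching. The record IDs and the `precision_recall` helper are hypothetical and exist only for illustration; they are not taken from any real system.

```python
def precision_recall(predicted, actual):
    """Return (precision, recall) for two sets of matched record IDs."""
    true_positives = len(predicted & actual)
    # Precision: share of predicted matches that are real matches.
    precision = true_positives / len(predicted) if predicted else 0.0
    # Recall: share of real matches that the model found.
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall


# Hypothetical record IDs, invented for this example.
predicted_matches = {"a1", "a2", "a3", "a4"}
actual_matches = {"a1", "a2", "a3", "a5", "a6"}

precision, recall = precision_recall(predicted_matches, actual_matches)
print(f"precision={precision:.2f} recall={recall:.2f}")  # precision=0.75 recall=0.60
```

Note that neither number says anything about whether a correctly matched person goes on to file a claim.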

The Behavioral Variables ML Models Consistently Miss

Human behavior introduces variables that are hard to formalize. Present bias, the tendency to postpone an action even when it would pay off later, is difficult to measure directly. A model can predict eligibility but not the internal decision to defer action indefinitely.

Training data does not capture emotional barriers such as embarrassment over having lost track of money. Distrust of institutions is rarely a feature, although it has a significant impact on follow-through. Cognitive load varies across individuals and settings, which affects the capacity to complete multi-step processes.

Life circumstances add further instability. Intentions can be derailed overnight by stress, illness, or an abrupt change in work demands. Motivation changes from day to day, yet many models assume users stay the same. Efforts to add psychological proxies to feature sets have had limited effect because these factors are contextual, transient, and hard to observe.

The Procrastination Paradox in Prediction Models

Most models are built to predict what will happen, not when it will happen. They can identify who is likely to act but struggle to estimate timing. Time-series methods have been designed to predict periods of engagement, but procrastination disrupts otherwise predictable trends. Hyperbolic discounting, in which future rewards are heavily undervalued, is not well modeled by typical architectures.
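As a rough illustration of hyperbolic discounting, the sketch below uses the standard form V = A / (1 + kD), where A is the reward, D the delay, and k a discount parameter. The value of `k`, the 60-day delay, and the `effort_cost` threshold are assumptions chosen for the example, not estimates from data.

```python
def hyperbolic_value(amount, delay_days, k):
    """Perceived present value of a reward received after delay_days."""
    return amount / (1.0 + k * delay_days)


def acts_now(amount, delay_days, k, effort_cost):
    """Hypothetical decision rule: act only if the perceived value of the
    delayed reward exceeds the immediate effort of filing the claim."""
    return hyperbolic_value(amount, delay_days, k) > effort_cost


# Assumed inputs: a 500 dollar claim paid out about 60 days after filing,
# k = 0.05 per day, and an effort cost that feels like 150 dollars.
perceived = hyperbolic_value(500, delay_days=60, k=0.05)
print(round(perceived, 2))  # 125.0
print(acts_now(500, delay_days=60, k=0.05, effort_cost=150))  # False
```

Under these assumed numbers a fully accurate 500 dollar prediction still loses to the immediate hassle of acting, which is the pattern the paragraph above describes.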

Consequently, forecasts are not time-specific. A high-probability user might act today, in a few months, or not at all. This unpredictability has prompted interventions designed around the psychology of procrastination. Claim Notify, for example, has built intervention systems that pair timely notifications with low-friction processes, on the premise that a prediction does not by itself produce a decision.

Reinforcement learning has been proposed as a solution, but delayed rewards limit how well it applies to modeling human procrastination.

Notification Optimization: When More Data Makes Things Worse

More personalization is not necessarily more effective. A/B tests commonly find that hyper-personalized notifications lower action rates. Optimization is misled when it targets engagement measures such as opens or clicks, which do not reliably correlate with completion.
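The sketch below shows how the choice of metric can flip the winner of an A/B test. The variant names and all counts are invented for illustration and are not results from any experiment.

```python
# Hypothetical A/B test counts, invented for this example.
variants = {
    "generic": {"sent": 1000, "opened": 200, "completed": 50},
    "hyper_personalized": {"sent": 1000, "opened": 350, "completed": 30},
}


def rate(stats, event):
    """Share of sent notifications that reached the given event."""
    return stats[event] / stats["sent"]


# Pick a winner by engagement (opens) and by the outcome that matters.
best_by_opens = max(variants, key=lambda name: rate(variants[name], "opened"))
best_by_completion = max(variants, key=lambda name: rate(variants[name], "completed"))

print(best_by_opens)       # hyper_personalized
print(best_by_completion)  # generic
```

An optimizer that only sees opens would ship the variant that completes fewer claims in this made-up scenario.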

Highly specific messages can produce an uncanny-valley effect, or a feeling of being watched rather than cared for, because they seem to threaten privacy. Optimizing for short-term engagement can undermine trust and long-term behavioral change, creating a tension between model objectives and real-world behavior.

The Context Window Problem: What Models Can’t See

Machine learning models are limited in scope. They cannot see life events that reshape a person's capacity to make decisions. Individual-level data rarely reflects relationship dynamics, cultural norms, or community influences. Scheduling constraints, stress, and emotional bandwidth are invisible to them.

This makes predictions point-in-time snapshots rather than holistic assessments. Financial decisions are embedded in a wider life context, yet models often treat them as independent of it. This mismatch is why accurate predictions often do not lead to action.

Hybrid Approaches: Combining ML with Behavioral Science

Bridging the gap calls for hybrid systems. Behavioral research can inform feature engineering so that it identifies points of friction, not only eligibility signals. Psychology, rather than pure engagement optimization, should govern the timing of interventions.

Human-in-the-loop methods supply judgment in areas where models fail, especially communication and timing. Evaluating behavioral interventions through experiments, alongside prediction accuracy, gives a more meaningful assessment. The best systems use machine learning to identify opportunities and behavioral science to prompt action.
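One way to assess prediction accuracy and behavior together is an end-to-end figure: the expected amount actually recovered per notified user. This is a simplified sketch, not an established metric. The `expected_recovered_value` helper and both completion rates are assumptions made for illustration, and the calculation treats match correctness and user follow-through as independent.

```python
def expected_recovered_value(match_confidence, completion_rate, amount):
    """Expected dollars recovered per notified user, assuming the match
    being correct and the user completing the claim are independent."""
    return match_confidence * completion_rate * amount


# Hypothetical experiment arms: same model, different intervention design.
baseline = expected_recovered_value(0.95, completion_rate=0.10, amount=500)
low_friction = expected_recovered_value(0.95, completion_rate=0.25, amount=500)

print(f"baseline: {baseline:.2f}, low friction: {low_friction:.2f}")
```

In this sketch the model's 95 percent confidence is identical in both arms, so any difference in the outcome comes entirely from the behavioral side.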

Toward Behaviorally Informed Machine Learning

Machine learning is effective at predicting financial opportunities and ineffective at predicting human follow-through. Accuracy does not translate into impact when psychology is neglected. Behavioral irrationality is not an edge case. It is a core constraint.

The future of financial AI lies in cooperation between data scientists and behavioral researchers. Prediction systems should account for emotion, trust, and timing. Once machine learning is designed around how people actually behave, the distance between insight and action can start to close.